A history of point to point digital microwave radio systems

This article first appeared in the Telecommunications Heritage Journal, Issue Number 83, Summer 2013 and is reproduced with the permission of the Telecommunications Heritage Group.

This article presents my perspective on the evolution of point to point digital microwave radio systems over the last quarter of a century. I started working with these systems, which for the sake of brevity I will call microwave radios, during the mid 1980s. Digital microwave radios were very new at the time; most microwave radio systems in use were analogue.

Early systems were designed for free standing deployment in a communications room, with long lengths of waveguide used to connect the microwave radio to a horn or parabolic antenna, commonly known as a dish antenna or simply ‘dish’. As a result of this particular configuration, the microwave radios tended to be lower in frequency (a relative term of course in microwave engineering), typically below 12GHz and often 6 or 4GHz. Examples of such deployments include the original UK trunk network deployed by BT and, some years later, the Mercury Communications national figure of eight network (11GHz). These networks are no longer in service as long distance transmission is now carried on optical fibre systems. This shift to fibre for trunk networks has recently been highlighted by the removal of the large microwave horn antennas from the BT Tower in London. Despite this, the market for microwave radio is larger than ever as systems have shifted focus to access rather than trunk applications, of which I will say more later in this article.

Microwave radio link design

While I will not provide a tutorial on microwave link planning within this article (please email me if you’re interested in such information), I believe it will be beneficial to provide a brief overview of the main parameters and planning considerations. With a little research you will find many definitions of the ‘microwave frequency band’. Traditionally, frequencies from 1GHz to 30GHz have been known as microwave, however one could argue it starts lower, at 300MHz. Equally, we’re working with bands above 30GHz, more correctly known as millimetre wave bands, but still generally called microwave. It is best to check the definition in use against specific frequency references if it’s important, however common terminology generally refers to anything between 1GHz and 100GHz as microwave.

With such a wide range of frequencies included in the microwave spectrum, we find there is a correspondingly wide range of propagation characteristics. Destructive interference from multi-path propagation (caused by phenomena such as atmospheric ducting) influences lower bands, while the dominant fading mechanism for frequencies above approx. 18GHz is rainfall, quite a common occurrence in the UK and elsewhere. To allow for these fading mechanisms we must include a safety factor (or fade margin) when calculating the power budget to ensure microwave radio links meet their target availability, typically expressed as uptime of 99.99%, 99.995% or 99.999%. The higher this figure, the greater the required fade margin for a given link configuration. This has a knock on effect on the practical link design parameters such as frequency band, transmit power, modulation scheme, transmit channel bandwidth, and antenna size. It should also be noted that free space path loss, the attenuation a signal suffers as it passes through the air, increases with frequency, so a longer link length can be achieved in a lower frequency band for a given configuration.
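The relationships described above can be sketched in a few lines of Python. This is only an illustrative outline, not a planning tool: the free space path loss expression is the standard textbook formula for distance in km and frequency in GHz, while the function names and the example link values (transmit power, antenna gains, receiver threshold) are assumptions chosen for illustration and do not come from any particular radio.

```python
import math


def free_space_path_loss_db(distance_km: float, frequency_ghz: float) -> float:
    """Standard free space path loss for distance in km and frequency in GHz."""
    return 92.45 + 20 * math.log10(distance_km) + 20 * math.log10(frequency_ghz)


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Convert an availability target into permitted outage time per (365 day) year."""
    minutes_per_year = 365 * 24 * 60
    return (1 - availability_percent / 100) * minutes_per_year


def fade_margin_db(tx_power_dbm: float, tx_gain_dbi: float, rx_gain_dbi: float,
                   path_loss_db: float, rx_threshold_dbm: float) -> float:
    """Margin between the unfaded receive level and the receiver threshold."""
    received_dbm = tx_power_dbm + tx_gain_dbi + rx_gain_dbi - path_loss_db
    return received_dbm - rx_threshold_dbm


if __name__ == "__main__":
    # Hypothetical 10 km link compared in two bands (all other values assumed).
    for f_ghz in (7.5, 23.0):
        loss = free_space_path_loss_db(10.0, f_ghz)
        margin = fade_margin_db(20.0, 40.0, 40.0, loss, -75.0)
        print(f"{f_ghz} GHz: path loss {loss:.1f} dB, fade margin {margin:.1f} dB")

    for target in (99.99, 99.995, 99.999):
        print(f"{target}% -> {downtime_minutes_per_year(target):.1f} min/year")
```

An availability of 99.99% permits roughly 53 minutes of outage per year, while 99.999% permits only about 5 minutes, which is why the higher targets demand a larger fade margin.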

Microwave radio equipment configuration

So, that’s a very little bit of useful theory; a good source for additional information is [1]. Now onto digital microwave heritage.

As higher frequency systems were developed it became obvious that large all indoor solutions would not be possible since the attenuation within the waveguide increases significantly with frequency. For example, a metre of 7.5GHz waveguide has an attenuation of 0.06dB whilst a metre of 23GHz waveguide has an attenuation of 0.27dB. Given typical waveguide lengths of 20 to 50 metres from equipment shelter to antenna location, it is simple to foresee the problems that would occur given the limited transmit power (typically +18 to +30dBm depending upon band and application), along with fixed receiver sensitivity for a given channel bandwidth and modulation scheme.
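To make the scale of the problem concrete, the per-metre figures quoted above can be multiplied out over the typical 20 to 50 metre runs. The short sketch below does just that; the function and dictionary names are illustrative, and the only inputs are the attenuation values and lengths given in the text.

```python
# Attenuation per metre of waveguide, as quoted in the text (dB/m).
ATTENUATION_DB_PER_M = {"7.5GHz": 0.06, "23GHz": 0.27}


def waveguide_loss_db(attenuation_db_per_m: float, length_m: float) -> float:
    """Total loss of a waveguide run of the given length."""
    return attenuation_db_per_m * length_m


if __name__ == "__main__":
    for band, attenuation in ATTENUATION_DB_PER_M.items():
        for length_m in (20, 50):
            loss = waveguide_loss_db(attenuation, length_m)
            print(f"{band}, {length_m} m run: {loss:.1f} dB")
```

A 50 metre run costs about 3dB at 7.5GHz but 13.5dB at 23GHz, and this comes straight out of the link power budget at each end of the link that uses such a run.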

The solution to this challenge was to split the microwave radio system into an indoor unit (IDU) containing the baseband and modem, connected to an outdoor unit (ODU) containing the microwave transceiver with frequency up-conversion and power amplification in the transmit path, and low noise amplifier and down-converter on the receive side. The interconnection between the indoor unit and outdoor unit uses an intermediate frequency signal, typically 10s to 100s of MHz, that can be carried over coaxial cable, which is much cheaper to purchase and install than waveguide and which has no impact on link power budget. Such split mount systems offer greater flexibility: the outdoor unit can be mounted at the base of the tower with a shorter length of waveguide to the antenna (compared with waveguide run back into the equipment shelter), it can be mounted on the tower behind the antenna or, as is increasingly common, it can be directly mounted to the back of the antenna as an integrated system. Antennas themselves have evolved too, from horns to parabolic dishes with ever increasing amounts of sophistication and performance.

PDH, SDH, Hybrid and an evolution to all Packet radio

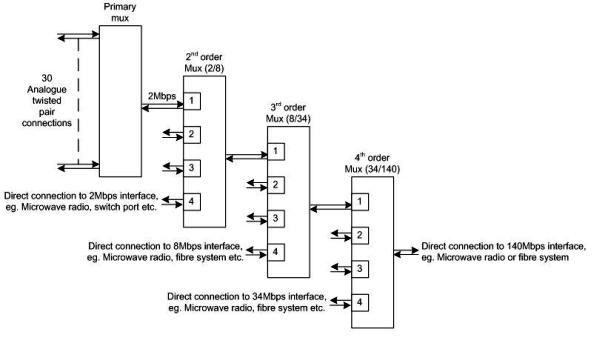

Early digital microwave radio systems implemented the Plesiochronous Digital Hierarchy (PDH). Systems operated with PDH inputs/outputs, or tributaries - often shortened to tribs - of 2Mbps, 8Mbps, 34Mbps or 140Mbps (these are rounded figures). These data rates aligned with typical telecommunications transmission networks. We can carry 30 traditional 64kbps voice calls over a 2Mbps circuit. An 8Mbps circuit could carry 4 x 2Mbps signals, hence 120 voice channels, and 34Mbps could carry up to 480 voice calls. The highest PDH rate officially standardised by the International Telecommunication Union (ITU) is 140Mbps, capable of carrying 1920 simultaneous voice calls. To access the radio system a single interface would be provided at the PDH line rate, so circuits had to be aggregated up to that rate via a PDH mux (multiplexer). At this time most circuits were connected to end systems (such as System X telephone exchanges or remote concentrators) at the 2Mbps level. PDH multiplexing followed the strict hierarchy, therefore a chain of multiplexers would be connected together in what came to be known as the “mux mountain” as illustrated in figure 4.

Starting on the left of figure 4, we have several options for connectivity to the E1 ports of the second order mux. These include the output of a primary mux containing up to 30 voice channels (with channel associated signalling or common channel signalling) or a mix of voice and data, or a direct 2Mbps interface from a microwave radio or a digital switch port.
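The voice channel counts quoted for each PDH level, and the size of the resulting mux mountain, follow from simple arithmetic: each level multiplexes four tributaries of the level below. The sketch below works this through; the names are illustrative, and the mux count assumes a fully populated, strict hierarchy with one multiplexer per group of four tributaries and no skip-muxing.

```python
VOICE_CHANNELS_PER_2MBPS = 30   # 64kbps voice channels in a 2Mbps circuit
TRIBS_PER_MUX = 4               # each PDH level combines four lower-level tributaries
PDH_LEVELS = ["2Mbps", "8Mbps", "34Mbps", "140Mbps"]


def voice_channels(level_index: int) -> int:
    """Voice channels carried at a PDH level (0 = 2Mbps, 3 = 140Mbps)."""
    return VOICE_CHANNELS_PER_2MBPS * TRIBS_PER_MUX ** level_index


def muxes_in_mountain(top_level_index: int) -> int:
    """Higher-order muxes needed to build one fully loaded signal at the
    given level from 2Mbps tributaries (assumes a strict 4:1 hierarchy)."""
    return sum(TRIBS_PER_MUX ** k for k in range(top_level_index))


if __name__ == "__main__":
    for index, name in enumerate(PDH_LEVELS):
        print(f"{name}: {voice_channels(index)} voice channels, "
              f"{muxes_in_mountain(index)} higher-order muxes")
```

Under these assumptions a fully loaded 140Mbps system needs 16 second order, 4 third order and 1 fourth order multiplexer at each end, 21 in total, before any primary muxes are counted.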

With increasing deployment of access microwave radio systems, the concept of the skip-mux evolved. This resulted in an n x 2Mbps microwave radio with ‘n’ between 1 and 16. Over time this evolved to today’s systems supporting up to 48 x 2Mbps tribs. The adoption of this solution, effectively incorporating the mux functionality within the radio IDU, has reduced the cost and greatly increased the flexibility of deploying point to point digital microwave radio systems.

The deployment of cellular networks, from analogue systems through 2G GSM and more recently 3G UMTS, has driven significant deployment of access microwave radio. Mobile operators looking for cost optimised backhaul between their cellular base stations and core sites deploy microwave radio systems as an alternative to leasing 2Mbps circuits from fixed network providers. There are now tens of thousands of microwave radio systems deployed in the UK.

As telecoms transmission systems evolved to even higher capacity, the Synchronous Digital Hierarchy (SDH) was standardised by the ITU during the late 1980s, with commercial equipment available from the early 1990s. SDH introduced data rates from 155Mbps to 2.5Gbps (and higher rates have been introduced since), but only the lowest SDH data rate of 155Mbps was appropriate for implementation on microwave radios at the time. The 155Mbps rate, known as Synchronous Transfer Module level 1 (STM-1), required the use of higher order modulation schemes to realise 155Mbps in a 28MHz radio channel.
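A rough calculation shows why higher order modulation was needed. The sketch below divides the STM-1 line rate (155.52Mbps) by the 28MHz channel width to get the required spectral efficiency, then finds the smallest square-law QAM constellation that could carry it. It makes the idealised assumption that the symbol rate equals the channel bandwidth and ignores error correction overhead and filter roll-off, so it gives a lower bound only; the function names are illustrative.

```python
import math

STM1_RATE_BPS = 155.52e6       # STM-1 line rate
CHANNEL_BANDWIDTH_HZ = 28e6    # radio channel width quoted in the text


def required_spectral_efficiency(bit_rate_bps: float, bandwidth_hz: float) -> float:
    """Bits per second that must be carried per hertz of channel bandwidth."""
    return bit_rate_bps / bandwidth_hz


def minimum_qam_order(bits_per_symbol: float) -> int:
    """Smallest 2^n constellation carrying at least the given bits per symbol.
    Idealised: assumes one symbol per hertz and no coding or filtering overhead."""
    return 2 ** math.ceil(bits_per_symbol)


if __name__ == "__main__":
    efficiency = required_spectral_efficiency(STM1_RATE_BPS, CHANNEL_BANDWIDTH_HZ)
    print(f"Required efficiency: {efficiency:.2f} bit/s/Hz")
    print(f"Idealised minimum constellation: {minimum_qam_order(efficiency)}QAM")
```

Even under these generous assumptions at least 64 constellation points are required, and a practical radio with coding and filtering overhead needs more than this bound suggests.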

The evolution of microwave radio systems continues, with significant progress made over the last decade. Modern systems support parallel TDM and Ethernet transmission and more recently, all packet based systems have been introduced. This evolution is aligned with the general telecoms shift from TDM to all-IP networking. Higher order modulation schemes and new frequency bands along with improvements in antenna technology ensure microwave radio systems are as relevant today as they were when I started working with this technology, a little over 25 years ago . . .

Reference

[1] Microwave Radio Transmission Design Guide (second edition) by Trevor Manning
